Meta Description:

Explore the ethical implications of emotional manipulation by AI in sales. Learn how businesses can balance personalization and persuasion without crossing moral boundaries.

Introduction

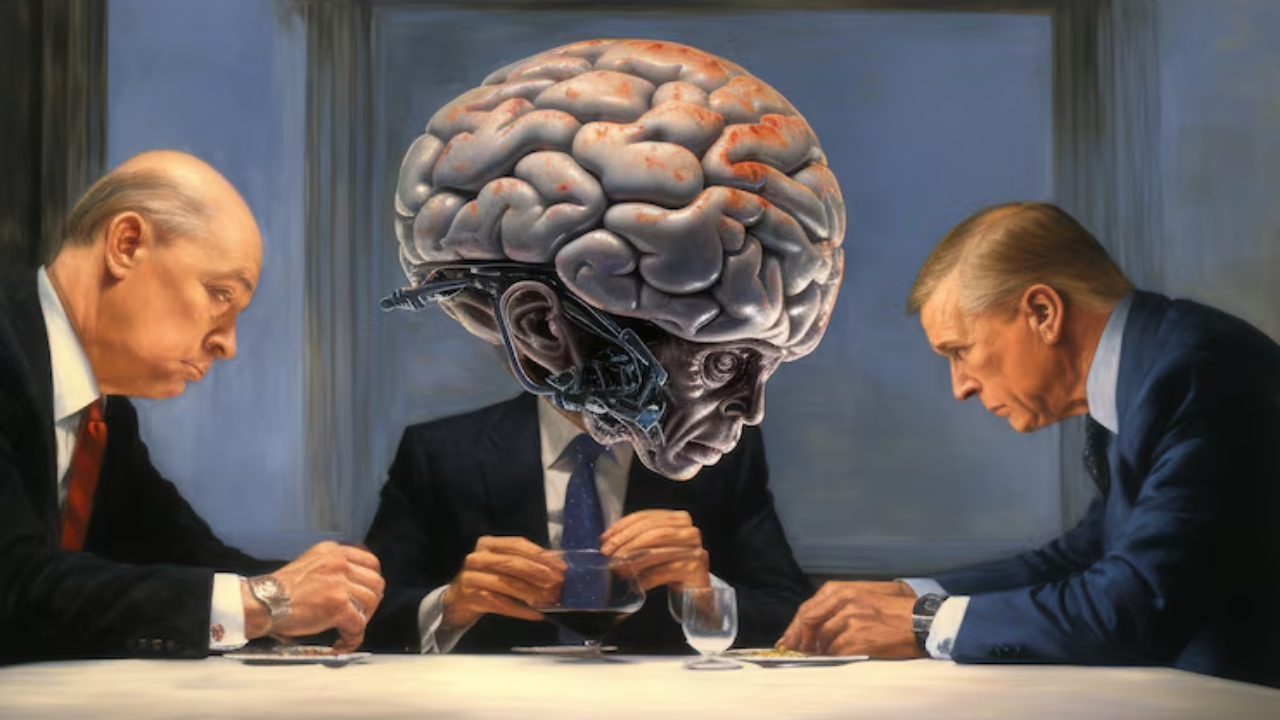

As AI continues to revolutionize industries, its role in sales and marketing has become more powerful and more controversial. With the ability to analyze customer emotions and behavior in real time, AI systems can now tailor messaging with pinpoint precision. But as businesses tap into these emotional insights, an important question arises: Where do we draw the ethical line between persuasion and manipulation?

What Is Emotional Manipulation by AI?

Emotional manipulation by AI refers to the use of data-driven insights to influence consumers' behavior by targeting their emotions, vulnerabilities, and psychological triggers. Through sentiment analysis, facial recognition, and behavioral tracking, AI systems can predict and react to a consumer's emotional state, often without the consumer being aware of it.

Examples include:

- AI-generated product recommendations during moments of emotional stress

- Chatbots using empathetic language to nudge decisions

- Algorithms that exploit fear of missing out (FOMO) to drive urgency

Ethical Concerns: Where Persuasion Becomes Manipulation

While personalized experiences can enhance customer satisfaction, the line between helpfulness and exploitation is easily blurred. Here are the key ethical concerns:

1. Lack of Transparency

Consumers may not realize that an AI is tailoring its messages based on their emotional state. This lack of disclosure can undermine informed consent.

2. Exploitation of Vulnerabilities

When AI detects emotional distress—such as grief or loneliness—and uses that data to promote products, it veers into predatory marketing.

3. Loss of Autonomy

Manipulative AI can reduce a person’s ability to make rational, independent decisions, nudging them toward actions they might not have taken otherwise.

4. Data Privacy Violations

Gathering emotional data from facial expressions, voice tone, or browsing behavior often occurs without explicit permission, raising serious privacy issues.

Why Businesses Should Care

Beyond potential legal ramifications, unethical emotional manipulation can damage brand trust and customer loyalty. Today's consumers are increasingly aware of, and critical about, how their data is used. Companies that prioritize ethical AI usage can position themselves as leaders in responsible innovation.

Balancing AI Capabilities with Ethical Responsibility

✅ Use AI to Empower, Not Exploit

Design AI systems that support user decision-making rather than override it.

✅ Be Transparent

Let users know when AI is being used and how it interacts with their emotional data.

✅ Set Ethical Guidelines

Implement an internal framework to guide how emotional insights are used in marketing and sales.

✅ Obtain Informed Consent

Always secure user consent before collecting emotional or biometric data.
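
For teams that want to turn the practices above into something enforceable, one option is a small policy gate that runs before any emotional signal is collected or used. The sketch below is illustrative only: the signal names, the list of protected emotional states, and the `UserContext`, `may_collect`, and `may_personalize` names are all assumptions made for this example, not part of any standard or library.

```python
from dataclasses import dataclass, field
from typing import Optional, Set

# Hypothetical categories treated as emotional or biometric data.
SENSITIVE_SIGNALS = {"facial_expression", "voice_tone", "sentiment"}

# Hypothetical states an internal guideline might bar from targeting.
PROTECTED_STATES = {"grief", "loneliness", "distress"}


@dataclass
class UserContext:
    user_id: str
    # Signals the user has explicitly agreed to share.
    consented_signals: Set[str] = field(default_factory=set)
    # Whether the user has been told that AI tailors the messaging.
    ai_disclosure_shown: bool = False


def may_collect(user: UserContext, signal: str) -> bool:
    """Allow collection of a sensitive signal only with explicit consent."""
    if signal not in SENSITIVE_SIGNALS:
        return True
    return signal in user.consented_signals


def may_personalize(user: UserContext, detected_state: Optional[str]) -> bool:
    """Allow emotion-based personalization only when AI use was disclosed
    and the detected state is not on the protected list."""
    if not user.ai_disclosure_shown:
        return False
    return detected_state not in PROTECTED_STATES


# Example: a user who consented to sentiment analysis only.
user = UserContext("u1", consented_signals={"sentiment"}, ai_disclosure_shown=True)
collect_voice = may_collect(user, "voice_tone")   # no consent for voice tone
target_grief = may_personalize(user, "grief")     # protected state
```

Keeping these checks in one place means the internal guideline is reviewed and updated as data (the two sets) rather than scattered across marketing code. Which states count as protected is a policy decision for the business, not something the code can settle.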

Looking Ahead: Regulation and Responsibility

As AI technology evolves, regulatory frameworks must evolve with it. Governments and industry bodies are beginning to explore standards around AI ethics, but companies shouldn’t wait for legislation. Proactively adopting ethical practices can serve as a competitive advantage and a safeguard against reputational risk.

Conclusion

AI has the power to transform sales, but with great power comes great responsibility. Emotional manipulation may deliver short-term gains, but in the long run, ethically designed AI will build stronger, more sustainable customer relationships. Businesses that respect emotional boundaries will earn not just conversions but trust.