Meta Description:

Learn how to program AI to deliver ethical emotional responses, balancing empathy, privacy, and safety in human-AI interaction. Discover best practices and key principles in ethical AI development.

Artificial Intelligence (AI) is becoming more human-like, not only in what it can do but also in how it responds. From virtual assistants and chatbots to therapy bots and customer service agents, AI is now expected to show emotional intelligence. That expectation raises a practical question: how do you ensure that an AI's emotional responses are ethical?

In this article, we’ll explore how to program AI for ethical emotional responses, ensuring your systems are both effective and morally responsible.

Why Ethical Emotional Responses Matter

Humans are wired for emotion. When AI systems respond emotionally—whether showing empathy, concern, or positivity—they influence user behavior and feelings. Without ethical guardrails, emotional AI can easily:

- Manipulate emotions for profit (e.g., upselling using empathy)

- Cause psychological harm (e.g., insensitivity to distress)

- Breach privacy (e.g., probing for feelings the user did not choose to share)

Core Principles of Ethical Emotional AI

To create emotionally intelligent AI responsibly, keep these core principles in mind:

1. Transparency

AI systems should clearly disclose that they are not human. Users should understand they’re interacting with a machine, not a person.

Example: A chatbot introducing itself as a virtual assistant helps build trust and clarity.

2. Consent

Emotional data, such as tone, sentiment, or facial expression, must be collected only with the user's permission. Emotional profiling without consent is a breach of ethics and, depending on the jurisdiction and the kind of data involved, may also violate data-protection law.
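
One way to make consent enforceable rather than aspirational is to gate every emotional signal on an explicit opt-in. The sketch below is a minimal illustration, not a complete consent system: `ConsentRecord`, `collect_signal`, and the signal names are hypothetical, and a real deployment would also need persistence, withdrawal of consent, and audit logging.

```python
from dataclasses import dataclass, field
from typing import Optional, Set


@dataclass
class ConsentRecord:
    """Hypothetical per-user record of which emotional signals may be processed."""
    allowed_signals: Set[str] = field(default_factory=set)  # e.g. {"text_sentiment"}


def collect_signal(consent: ConsentRecord, signal_type: str, raw_value: str) -> Optional[dict]:
    """Return the signal for processing only if the user opted in to this type."""
    if signal_type not in consent.allowed_signals:
        return None  # no consent: drop the data instead of storing or analysing it
    return {"type": signal_type, "value": raw_value}


consent = ConsentRecord(allowed_signals={"text_sentiment"})
print(collect_signal(consent, "text_sentiment", "I feel a bit low today"))
print(collect_signal(consent, "facial_expression", "<camera frame>"))
```

The default here is an empty set, so a user who has never been asked is treated as not having consented.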

3. Empathy Without Manipulation

AI can recognize and respond to emotions without exploiting them. If a user is sad, the AI might offer support, but it shouldn’t use that sadness to sell a product.
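
This principle can be turned into a simple guard: when the detected emotion suggests the user is vulnerable, promotional text is never attached to the reply. The following is a sketch under assumptions. The emotion labels and the `build_reply` function are made up for illustration, and the labels would come from whatever emotion model the system actually uses.

```python
from typing import Optional

# Assumed label set; a real system would use the labels its emotion model emits.
VULNERABLE_EMOTIONS = {"sad", "anxious", "lonely", "distressed"}


def build_reply(detected_emotion: str, base_reply: str, promo: Optional[str] = None) -> str:
    """Attach promotional text only when the user is not in a vulnerable state."""
    if promo is None or detected_emotion in VULNERABLE_EMOTIONS:
        return base_reply
    return f"{base_reply} {promo}"


print(build_reply("sad", "I'm sorry you're having a rough day.", "Try Premium for 20% off!"))
print(build_reply("neutral", "Here is your order status.", "Try Premium for 20% off!"))
```

Keeping the rule in one place, separate from the code that generates replies, makes it easier to review and test.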

4. Cultural Sensitivity

Emotional norms vary by culture. Programming AI to interpret and respond to emotions should account for these differences to avoid missteps.

5. Emotional Boundaries

AI should never overstep its role. It can be empathetic, but it must not give the illusion of deep emotional understanding it doesn’t possess.

Steps to Program Ethical Emotional Responses

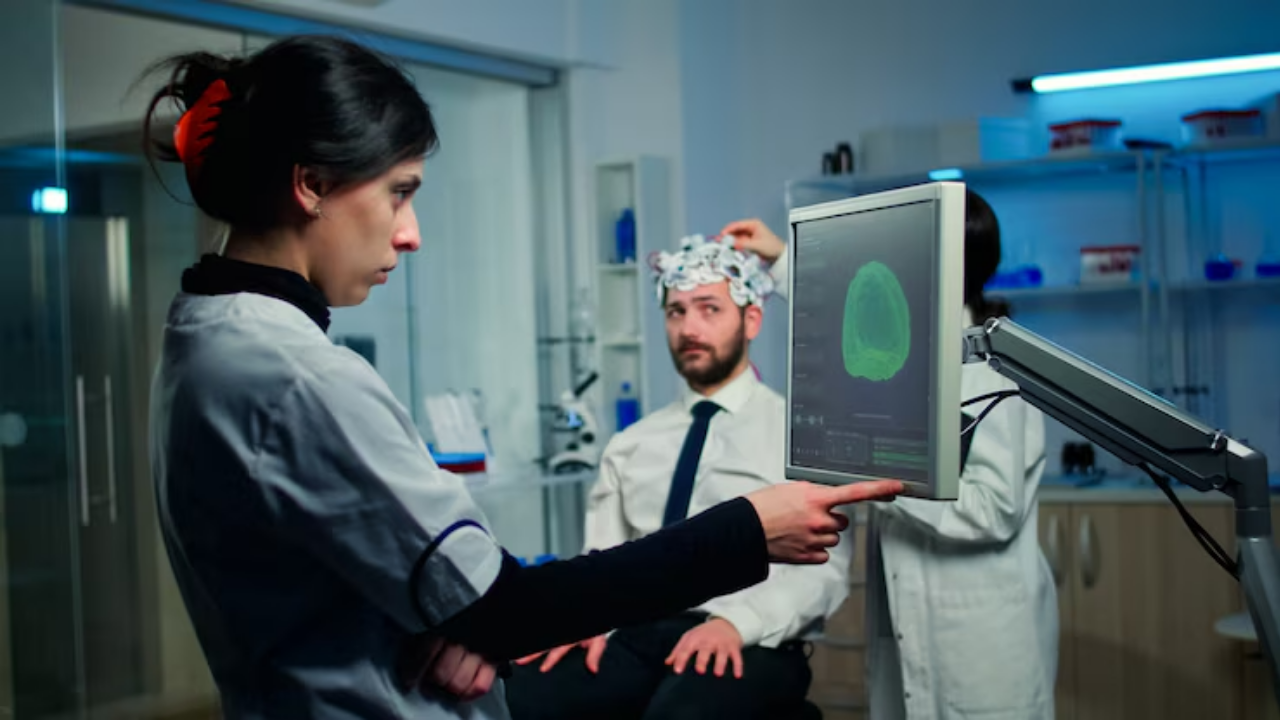

1. Use Emotion AI Carefully

Integrate emotion recognition technology such as sentiment analysis, facial expression recognition, or tone detection only where necessary.

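- Commercial emotion-recognition APIs exist, such as Affectiva (now part of Smart Eye). Check current availability and terms before relying on one; Microsoft, for example, announced in 2022 that it would retire emotion inference from its Azure Face service.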

- Keep models focused on coarse, surface-level signals (for example, positive, negative, or neutral sentiment) unless deeper analysis is truly warranted.

2. Train with Diverse, Ethical Datasets

Bias in emotional AI is common. Training data should be diverse, inclusive, and ethically sourced. Avoid datasets collected without consent.
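
As a rough illustration of what a pre-training check might look like, the sketch below drops records that lack explicit consent and then reports how each group is represented in what remains. The record fields (`consent`, `group`), the function names, and the `min_share` threshold are assumptions chosen for the example, not an established standard.

```python
from collections import Counter
from typing import Dict, List, Tuple


def filter_and_summarize(records: List[dict]) -> Tuple[List[dict], Dict[str, float]]:
    """Drop records without explicit consent and report each group's share."""
    consented = [r for r in records if r.get("consent") is True]
    counts = Counter(r["group"] for r in consented)
    total = sum(counts.values())
    shares = {group: n / total for group, n in counts.items()} if total else {}
    return consented, shares


def underrepresented(shares: Dict[str, float], min_share: float = 0.2) -> List[str]:
    """List groups whose share falls below the (assumed) minimum."""
    return sorted(g for g, s in shares.items() if s < min_share)


records = [
    {"text": "I'm thrilled", "label": "joy", "group": "en-US", "consent": True},
    {"text": "Not bad at all", "label": "joy", "group": "en-GB", "consent": True},
    {"text": "So tired of this", "label": "anger", "group": "en-US", "consent": True},
    {"text": "Leave me alone", "label": "anger", "group": "en-IN", "consent": False},
]
kept, shares = filter_and_summarize(records)
print(len(kept), shares, underrepresented(shares, min_share=0.4))
```

A group that drops out entirely after the consent filter will not appear in the shares at all, so the result should also be compared against the list of groups the system is meant to serve.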

3. Implement Response Filters

Create rules or filters to ensure emotionally intelligent responses align with ethical guidelines. For example:

- If a user shows distress: Offer supportive language or escalate to a human.

- If a user is aggressive: Stay neutral and offer de-escalation, not retaliation.
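
The two rules above can be sketched as a small rule table that runs after emotion detection and before a reply is sent. Everything here is illustrative: the emotion labels, the 0.0 to 1.0 intensity scale, the `escalate_at` threshold, and the template wording are assumptions, and real copy should be reviewed by qualified people.

```python
from enum import Enum


class Action(Enum):
    SUPPORT = "offer_support"
    ESCALATE = "escalate_to_human"
    DEESCALATE = "de_escalate"
    DEFAULT = "respond_normally"


# Illustrative wording only.
TEMPLATES = {
    Action.SUPPORT: "That sounds difficult. I'm here to help where I can.",
    Action.ESCALATE: "I'd like to connect you with a person who can help.",
    Action.DEESCALATE: "I understand this is frustrating. Let's work through it step by step.",
}


def choose_action(emotion: str, intensity: float, escalate_at: float = 0.8) -> Action:
    """Map a detected emotion and its intensity (0.0 to 1.0) to an action."""
    if emotion == "distress":
        return Action.ESCALATE if intensity >= escalate_at else Action.SUPPORT
    if emotion == "aggression":
        return Action.DEESCALATE  # stay neutral, never retaliate
    return Action.DEFAULT


def apply_filter(emotion: str, intensity: float, draft_reply: str) -> str:
    """Replace the draft reply with a safe template when a rule fires."""
    action = choose_action(emotion, intensity)
    return TEMPLATES.get(action, draft_reply)


print(apply_filter("distress", 0.9, "Have you seen our new plans?"))
print(apply_filter("neutral", 0.1, "Your parcel arrives on Friday."))
```

When no rule fires, the draft reply passes through unchanged, so the filter only overrides the system in the cases it was written for.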

4. Human Oversight

Critical conversations—especially those involving mental health or sensitive topics—should be supervised or escalated to human agents.
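
A minimal sketch of such a handoff is shown below, assuming a simple keyword screen and an in-memory queue. The patterns, `human_review_queue`, and `route_message` are hypothetical, and keyword matching is crude: it will miss indirect phrasing and flag harmless messages, so it should be treated as a floor for escalation rather than a substitute for proper risk assessment.

```python
import re
from collections import deque

# Illustrative patterns only; a production list needs expert input and testing.
SENSITIVE_PATTERNS = [r"\bself[- ]harm\b", r"\bsuicid\w*", r"\babuse\b"]

# Stand-in for whatever ticketing or paging system human agents actually use.
human_review_queue = deque()


def route_message(conversation_id: str, text: str) -> str:
    """Return "human" and queue the conversation if a sensitive topic appears."""
    if any(re.search(p, text, re.IGNORECASE) for p in SENSITIVE_PATTERNS):
        human_review_queue.append(conversation_id)
        return "human"
    return "bot"


print(route_message("c1", "I have been thinking about suicide"))
print(route_message("c2", "What are your opening hours?"))
print(list(human_review_queue))
```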

5. Regular Ethical Audits

Schedule periodic reviews of AI behavior using checklists or third-party audits. Regular reviews help catch unethical patterns early and let you adjust the system as usage and norms change.
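
Part of a checklist can be automated by scanning interaction logs for known bad patterns. The sketch below checks two illustrative items: whether the first bot turn disclosed that the user is talking to an AI, and whether promotional content was sent to a user showing a negative emotion. The log schema and the `audit_log` function are assumptions made for the example; they do not describe any particular logging format.

```python
from typing import List

NEGATIVE_EMOTIONS = {"sad", "anxious", "distressed"}  # assumed label set


def audit_log(entries: List[dict]) -> List[str]:
    """Return human-readable findings for two illustrative checklist items."""
    findings = []
    for e in entries:
        where = f"{e['conversation_id']} turn {e['turn']}"
        # Check 1: the first bot turn should disclose that the user is talking to an AI.
        if e["turn"] == 0 and not e["disclosed_ai"]:
            findings.append(f"{where}: missing AI disclosure")
        # Check 2: no promotional content while the user shows a negative emotion.
        if e["reply_had_promo"] and e["detected_emotion"] in NEGATIVE_EMOTIONS:
            findings.append(f"{where}: promotion sent to user flagged as {e['detected_emotion']}")
    return findings


log = [
    {"conversation_id": "c1", "turn": 0, "detected_emotion": "neutral",
     "reply_had_promo": False, "disclosed_ai": True},
    {"conversation_id": "c1", "turn": 1, "detected_emotion": "sad",
     "reply_had_promo": True, "disclosed_ai": False},
    {"conversation_id": "c2", "turn": 0, "detected_emotion": "neutral",
     "reply_had_promo": False, "disclosed_ai": False},
]
for finding in audit_log(log):
    print(finding)
```

Automated checks like these only cover patterns someone has already thought of, so they complement human or third-party review rather than replace it.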

Examples of Ethical Emotional AI in Action

- Woebot – A mental health chatbot that provides cognitive behavioral therapy techniques without pretending to be human.

- Replika – An AI companion app that includes disclaimers and allows users to customize emotional engagement levels.

- Google Assistant – Uses polite and empathetic responses but avoids pretending to have emotions or consciousness.

Challenges in Programming Emotional AI

- Emotion Misinterpretation – AI can misread sarcasm, cultural cues, or complex feelings.

- Data Privacy – Storing emotional data creates risks for security and misuse.

- Unrealistic Expectations – Users might become overly reliant or emotionally attached to AI.

Conclusion

Programming AI to express ethical emotional responses is not just a technical challenge—it’s a moral one. By prioritizing transparency, consent, and cultural awareness, developers can build systems that are emotionally intelligent without crossing ethical lines.

The future of emotional AI should not be about mimicking humans perfectly, but about enhancing human interaction safely and respectfully.